Hardened, Policy-Bound, and Provable AI Agents Tested Inside a Simulated Trial

Background

Google’s Agent Development Kit (ADK) gives developers a clean, well-organized foundation for building, evaluating, and shipping AI agents. It excels at experimentation and prototyping, yet it stops short of supplying the controls that production-grade enterprise systems insist upon: identity-bound execution, cryptographic provenance, runtime policy enforcement, and tamper-resistant audit trails. This paper presents SecureADK, an extension of ADK engineered around the principle that security must be intrinsic rather than bolted on. SecureADK introduces zero-trust runtime enforcement, dataset sealing through OmniSeal, and ledger-anchored provenance via Hyperledger. To make the contrast concrete, we walk through a courtroom orchestration scenario twice (once on plain ADK, once on SecureADK) and examine the differences. The takeaway is that ADK enables agents to collaborate, but SecureADK is what makes those collaborations verifiable, auditable, and ready for regulators in domains such as the judiciary, healthcare, finance, critical infrastructure, law enforcement, and defense.

The reach of AI agents continues to expand into consequential territory: legal analysis, clinical decision support, financial automation, and regulatory reporting. Systems operating in those arenas must satisfy a strict bar: deterministic reproducibility, identity attribution, evidentiary integrity, non-repudiation, policy governance, and forensic traceability. None of this is supplied by ADK out of the box. SecureADK is designed precisely to fill that void by binding security, governance, and provenance directly into the agent runtime.

Why a Trial Simulation Reveals Trust Gaps

A simulated courtroom is a uniquely demanding environment: it is high-stakes, multi-agent, and inherently adversarial. That combination makes it an excellent laboratory for testing whether a system actually delivers on trust. The roster of agents typically includes a judge, prosecution counsel, defense counsel, a medical expert, jurors, a clerk, and an evidence processor. They must move evidence between parties, argue logically, retrieve documents, render decisions, and produce verdicts that can withstand later scrutiny. The pressure profile is remarkably similar to that faced by regulated enterprise AI systems every day.

Running the Trial on Stock ADK

How It Operates

A standard ADK courtroom run begins when the user opens the trial. From there, agents pass prompts among themselves and invoke tools directly, evaluators assign scores, and a verdict is emitted at the end.

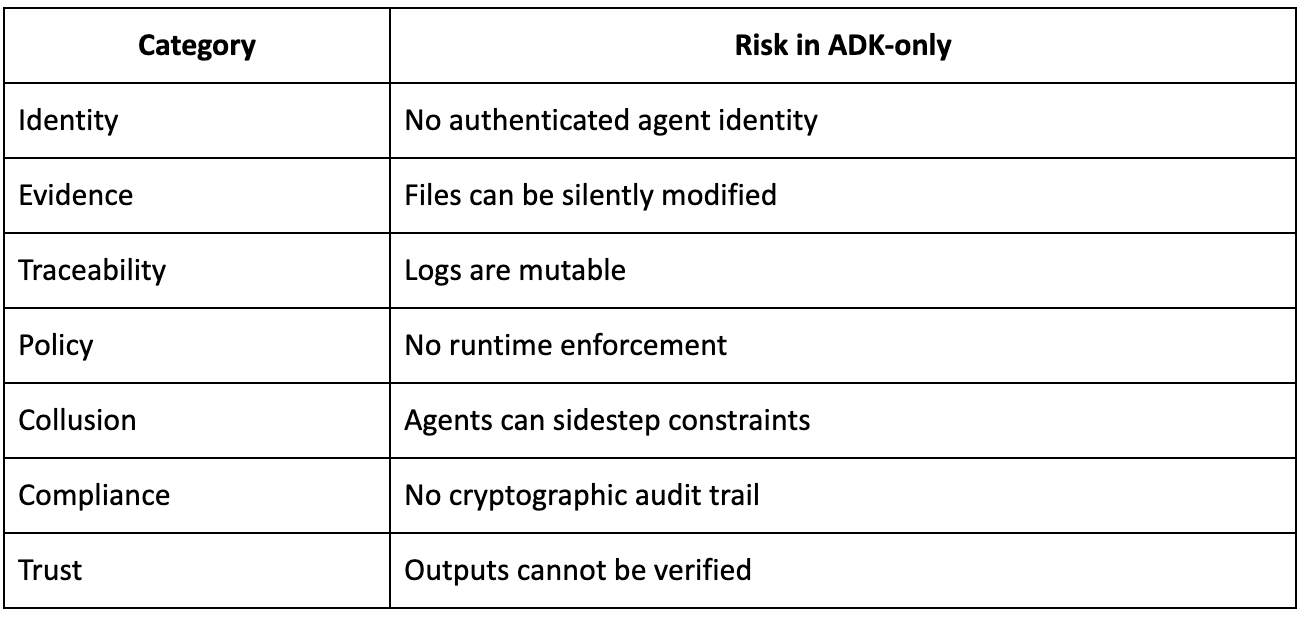

Where the Gaps Appear

Illustrative Breakdowns

- The defense agent quietly tampers with a piece of evidence.

- The medical agent relies on a dataset whose provenance has never been confirmed.

- A juror’s reasoning chain cannot be replayed.

- Tools are invoked without first checking whether the caller is authorized.

- The final verdict is impossible to audit.

The implication is straightforward: an ADK-only stack is fine for a demo, but it cannot stand up in a real courtroom or under regulatory scrutiny.
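Take the first breakdown as an example. As the stock scenario is described, nothing records what an exhibit looked like when it entered the trial, so a silent edit by the defense agent leaves no trace. The sketch below shows the simplest countermeasure: record a SHA-256 digest when evidence is taken in, then re-check it whenever an agent presents the artifact. It is a generic illustration built on Python's standard `hashlib`, not OmniSeal's actual scheme (which this paper does not describe), and `SealedEvidence`, `seal`, and `is_intact` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class SealedEvidence:
    """Hypothetical record: an evidence artifact plus the digest taken at intake."""
    evidence_id: str
    content: bytes
    digest: str  # hex SHA-256 of the content, recorded when the artifact was sealed


def seal(evidence_id: str, content: bytes) -> SealedEvidence:
    # Record the SHA-256 digest at intake so any later change becomes detectable.
    return SealedEvidence(evidence_id, content, hashlib.sha256(content).hexdigest())


def is_intact(record: SealedEvidence, presented: bytes) -> bool:
    # Recompute the digest of what an agent presents and compare it to the sealed one.
    return hashlib.sha256(presented).hexdigest() == record.digest


if __name__ == "__main__":
    exhibit = seal("exhibit-7", b"Blood alcohol: 0.12%")
    print(is_intact(exhibit, b"Blood alcohol: 0.12%"))  # True
    print(is_intact(exhibit, b"Blood alcohol: 0.02%"))  # False: altered after intake
```

A bare digest only helps if the stored value is itself protected from the same tampering, for example by anchoring it in an append-only ledger or signing it.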

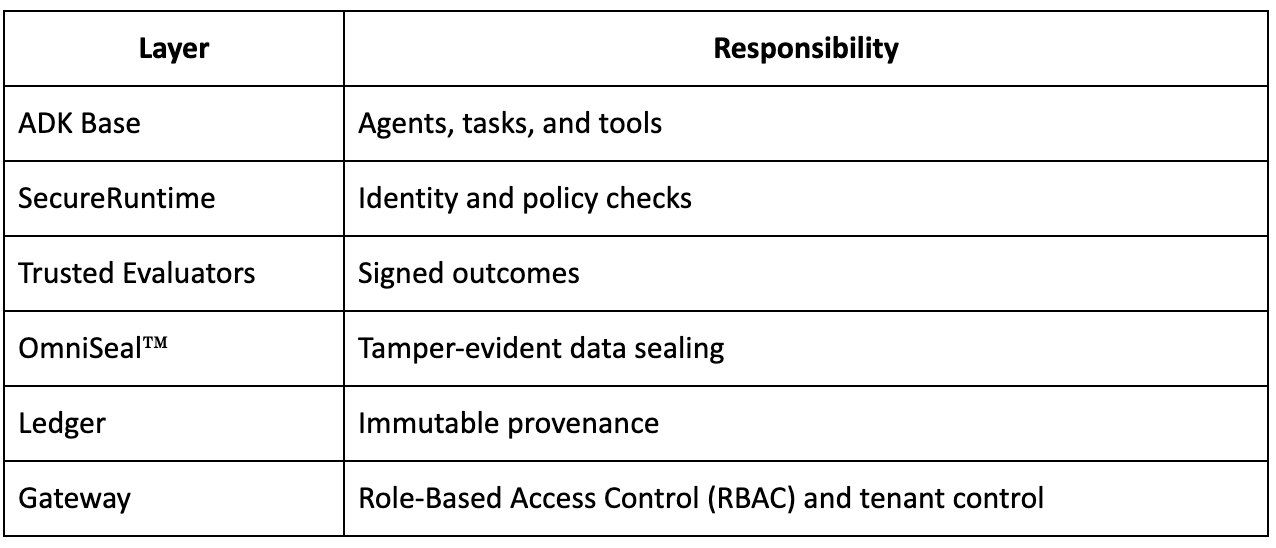

Inside SecureADK

Defense-in-Depth Stack

SecureADK is constructed around a layered architecture, with each layer carrying a distinct responsibility:

Running the Trial on SecureADK

Hardened Execution Path

- Each agent is provisioned with a cryptographic identity.

- Pieces of evidence are sealed under OmniSeal™.

- Tool invocations clear a policy gate before they are permitted.

- Evaluations are stamped with cryptographic signatures.

- Every interaction is committed to the ledger.

- The resulting verdict is sealed and fully reproducible.
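Committing every interaction to a ledger relies on the log being tamper-evident. SecureADK anchors this in Hyperledger; the sketch below does not use Hyperledger at all, but illustrates the underlying idea in plain Python under that substitution. Each entry stores the SHA-256 hash of its predecessor, computed over canonical JSON, so rewriting any earlier entry makes verification fail. `HashChainLedger`, `append`, and `verify` are hypothetical names chosen for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) keeps the hash reproducible.
    body = json.dumps({"prev": prev_hash, "payload": payload},
                      sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class HashChainLedger:
    """Hypothetical append-only log; a stand-in for a real distributed ledger."""
    entries: list = field(default_factory=list)

    def append(self, payload: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        h = _entry_hash(prev, payload)
        self.entries.append({"prev": prev, "payload": payload, "hash": h})
        return h

    def verify(self) -> bool:
        # Walk the chain: each entry must point at its predecessor and hash correctly.
        prev = GENESIS
        for e in self.entries:
            if e["prev"] != prev or _entry_hash(prev, e["payload"]) != e["hash"]:
                return False
            prev = e["hash"]
        return True


if __name__ == "__main__":
    ledger = HashChainLedger()
    ledger.append({"agent": "clerk", "action": "seal", "evidence": "exhibit-7"})
    ledger.append({"agent": "prosecution", "action": "read", "evidence": "exhibit-7"})
    print(ledger.verify())  # True
    ledger.entries[0]["payload"]["action"] = "delete"  # attempt to rewrite history
    print(ledger.verify())  # False
```

A single-process hash chain only makes tampering detectable; the replication and consensus of a real ledger are what make it hard for any one party to rewrite the chain and all its hashes consistently.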

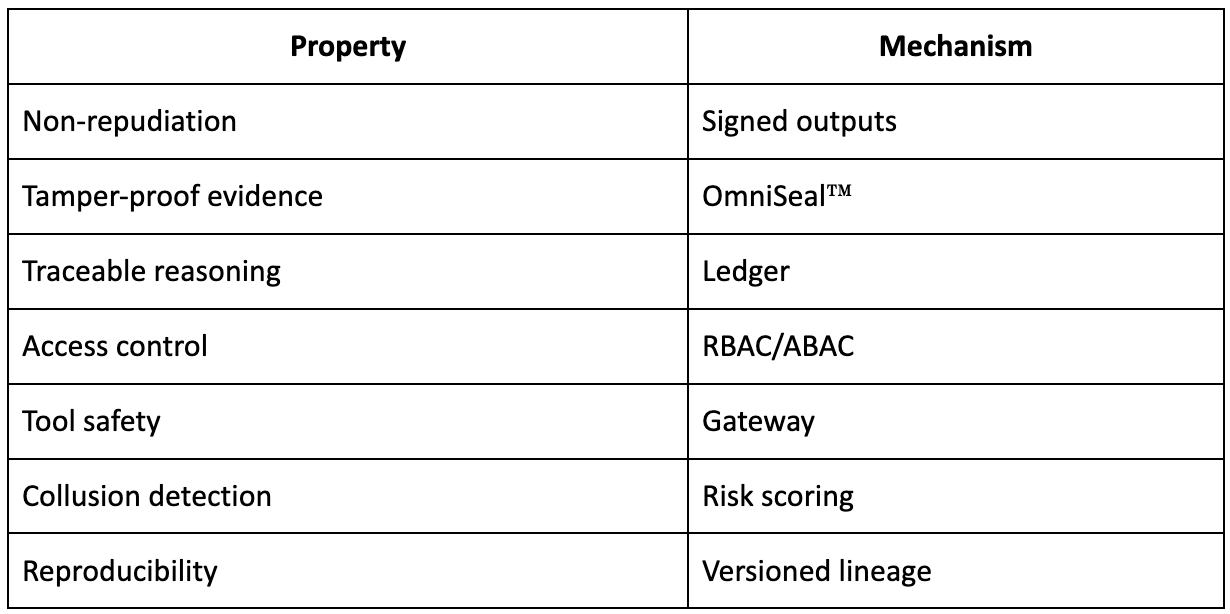

Assurances Provided

A Hardened Trial in Practice

- Evidence Handling: Each artifact is uploaded, sealed, hashed, and accompanied by a matching ledger entry.

- Prosecution Access: The agent’s identity is authenticated, policy compliance is verified, and access is restricted to read-only.

- Medical Expert: The dataset version is certified, and the evaluation carries a digital signature.

- Verdict: The judge agent signs the verdict, every contributing input is linked back to it, and the entire chain is auditable.
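The prosecution step combines three checks: authenticate the agent, evaluate policy, and permit read access only. Below is a minimal deny-by-default policy gate, assuming each agent holds a secret key and signs its requests with HMAC-SHA256. HMAC is a standard-library stand-in here; a real deployment would more likely use asymmetric signatures or certificates. The agent names, keys, `POLICY` table, and `policy_gate` function are hypothetical and do not represent SecureADK's API.

```python
import hashlib
import hmac

# Hypothetical per-agent secret keys (stand-ins for real cryptographic identities).
AGENT_KEYS = {
    "prosecution": b"k-prosecution",
    "defense": b"k-defense",
    "clerk": b"k-clerk",
}

# Policy-as-code: each (agent, action, resource type) tuple listed here is allowed.
# Anything not listed is denied, so prosecution is read-only (it has no "write" rule).
POLICY = {
    ("prosecution", "read", "evidence"),
    ("defense", "read", "evidence"),
    ("clerk", "write", "evidence"),
}


def _message(agent: str, action: str, resource: str) -> bytes:
    return f"{agent}|{action}|{resource}".encode("utf-8")


def sign_request(agent: str, action: str, resource: str) -> str:
    """Agent side: sign a tool-call request with the agent's own key."""
    return hmac.new(AGENT_KEYS[agent], _message(agent, action, resource),
                    hashlib.sha256).hexdigest()


def policy_gate(agent: str, action: str, resource: str, signature: str) -> bool:
    """Runtime side: authenticate the caller, then check the request against POLICY."""
    key = AGENT_KEYS.get(agent)
    if key is None:
        return False  # unknown identity
    expected = hmac.new(key, _message(agent, action, resource), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, signature):
        return False  # request was not signed with this agent's key
    resource_type = resource.split(":", 1)[0]  # "evidence:exhibit-7" -> "evidence"
    return (agent, action, resource_type) in POLICY


if __name__ == "__main__":
    read_sig = sign_request("prosecution", "read", "evidence:exhibit-7")
    print(policy_gate("prosecution", "read", "evidence:exhibit-7", read_sig))  # True
    write_sig = sign_request("prosecution", "write", "evidence:exhibit-7")
    print(policy_gate("prosecution", "write", "evidence:exhibit-7", write_sig))  # False
    print(policy_gate("defense", "read", "evidence:exhibit-7", read_sig))  # False: wrong signer
```

Keeping the rules as data, separate from the agents they constrain, is what lets the policy be reviewed, versioned, and audited on its own.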

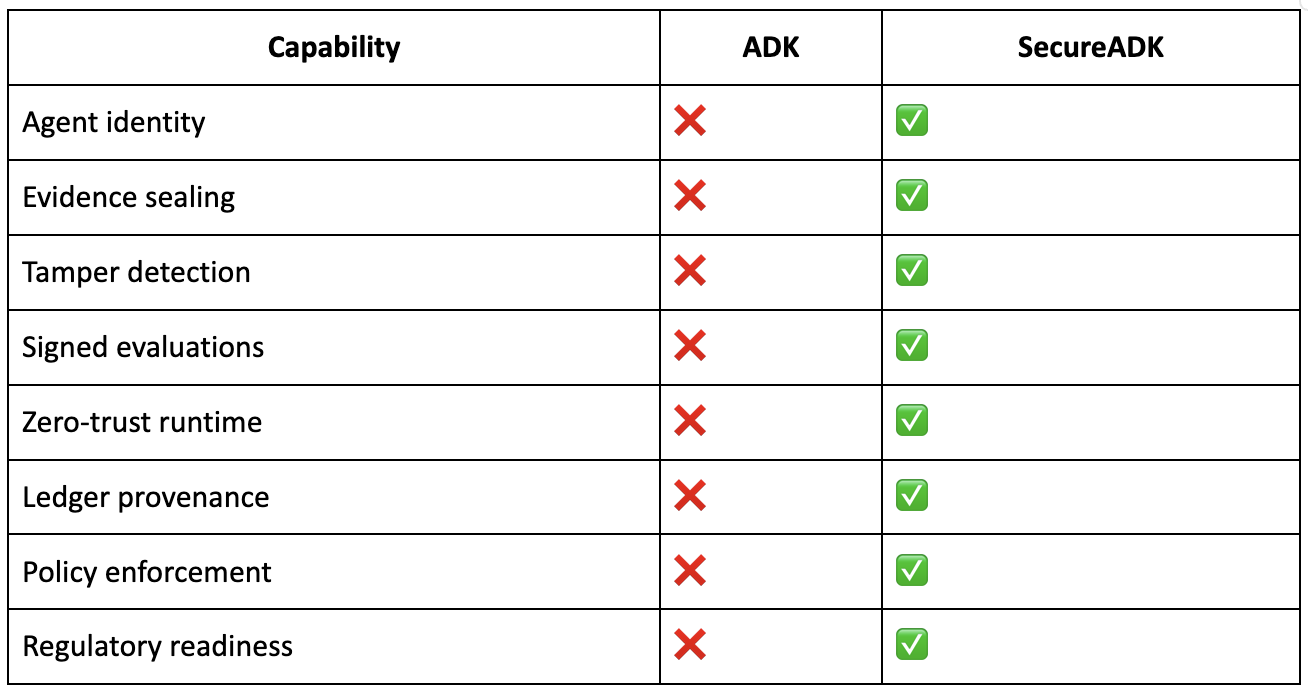

Side-by-Side Capability Review

The following table contrasts the capabilities of the two stacks:

Underlying Formal Properties

SecureADK contributes a set of formal properties to the orchestration environment:

- Integrity: Every artifact is cryptographically sealed.

- Accountability: Every action is bound to a specific identity.

- Determinism: Decision graphs can be replayed.

- Governance: Policy-as-code is enforced at runtime.

- Auditability: An immutable provenance ledger maintains a transparent record.

- Isolation: Tenant boundaries and sandboxing are preserved.
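Several of these properties can be seen together in one small artifact: a verdict record that lists the SHA-256 digest of every contributing input (integrity), carries the judge agent's signature (accountability), and can be re-verified later by an auditor (auditability). Serializing the record as canonical JSON means identical inputs always yield identical bytes and an identical signature. That is a precondition for replay, not full decision-graph replay. HMAC-SHA256 stands in for whatever signature scheme SecureADK actually uses (the paper does not specify one), and `sign_verdict`, `audit`, and `JUDGE_KEY` are hypothetical.

```python
import hashlib
import hmac
import json

JUDGE_KEY = b"k-judge"  # hypothetical signing key for the judge agent


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def canonical(obj: dict) -> bytes:
    # Sorted keys and fixed separators give the same bytes on every run.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_verdict(verdict: str, inputs: dict[str, bytes], key: bytes) -> dict:
    record = {
        "verdict": verdict,
        # Link every contributing input to the verdict by its SHA-256 digest.
        "inputs": {name: digest(data) for name, data in inputs.items()},
    }
    record["signature"] = hmac.new(key, canonical(record), hashlib.sha256).hexdigest()
    return record


def audit(record: dict, inputs: dict[str, bytes], key: bytes) -> bool:
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    expected = hmac.new(key, canonical(unsigned), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record["signature"]):
        return False  # record altered, or signed with a different key
    # Every input presented to the auditor must match the digest the judge signed.
    return unsigned["inputs"] == {name: digest(data) for name, data in inputs.items()}


if __name__ == "__main__":
    inputs = {"exhibit-7": b"Blood alcohol: 0.12%", "medical-eval": b"Impairment likely"}
    record = sign_verdict("guilty", inputs, JUDGE_KEY)
    print(audit(record, inputs, JUDGE_KEY))  # True
    tampered = {**inputs, "exhibit-7": b"Blood alcohol: 0.02%"}
    print(audit(record, tampered, JUDGE_KEY))  # False
```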

Wider Significance

- Legal Systems: SecureADK supports evidence admissibility and reproducible verdicts.

- Healthcare: Enables HIPAA-compliant AI reasoning.

- Finance: Underpins auditable trading agents.

- Defense: Establishes trusted command chains.

With SecureADK in place, an existing multi-agent ADK courtroom stack moves from simulation-grade up to forensic-grade, regulator-ready infrastructure.

Closing Perspective

SecureADK functions as a security and governance layer that sits on top of ADK. ADK delivers the underlying orchestration framework for AI agents, but it does not address the trust, compliance, and audit requirements that enterprise and regulated environments demand. SecureADK closes that gap by introducing data sealing, signed reasoning, enforced identity, end-to-end provenance logging, and support for regulatory compliance. Both layers are indispensable: ADK supplies the core intelligence and operational backbone, while SecureADK keeps those operations trustworthy, compliant, and auditable, producing a combined system fit for high-stakes, production-grade AI deployments.

About PureCipher Inc.

PureCipher is a leader in AI security and data integrity, dedicated to safeguarding national interests through advanced, quantum-resilient technologies. Its Artificial Immune System™ platform features OmniSeal™ (a patent-pending tamper-evident technology), alongside Noise-Based Communication for stealth transmission, Fully Homomorphic Encryption (FHE)–enabled AI processing, and secure, transparent AI agents. Drawing on deep expertise in AI, quantum computing, and cybersecurity, PureCipher™ pursues its mission of building a safer and more trustworthy world.

Contact: PureCipher™ Communications

Email: media@purecipher.com

Website: www.purecipher.com